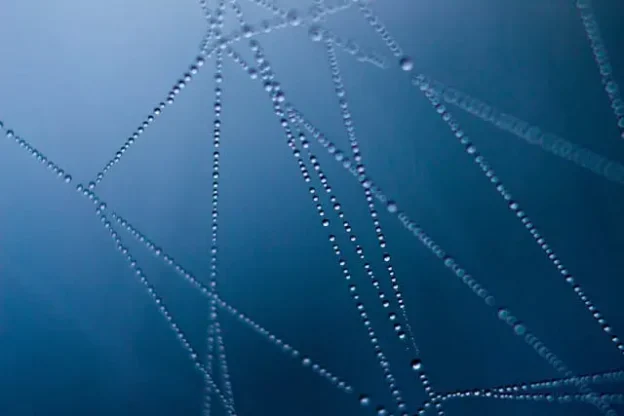

There’s nothing more frustrating for a webmaster than waiting to be crawled. Once you’ve perfected your on-site SEO, created great content, and built a spider-friendly link structure, all you can do is wait for the search engine crawlers to visit.

Google crawls your site at a frequency it determines. Google Webmaster Tools lets you set a crawl rate, but that setting only caps crawling at the rate you specify, so that your server doesn’t get hammered. It won’t encourage Googlebot to visit any more often if its algorithms decide there is no need. There are, however, methods you can use to speed up the bot’s crawl rate, and today we’ll look at four of them.
