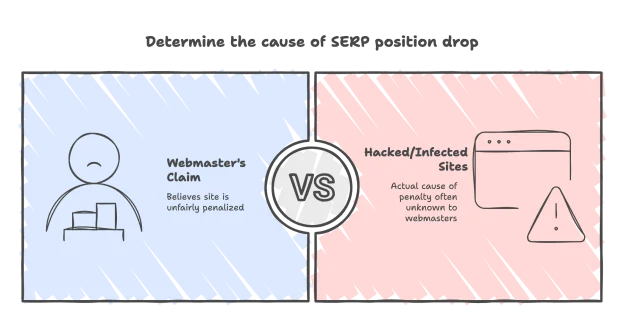

In a recent blog entry, Matt Cutts discusses a common response from owners of sites that have been delisted or have seen their SERP position drop. These webmasters say that there’s nothing wrong with their site, that they haven’t engaged in any shady link-building strategies, and that Google is unfairly punishing them. Cutts responds that in many of these cases the penalty occurs because the site has been hacked and infected with malicious software without the webmaster being aware of it.

Hacking a site is one of several Negative SEO strategies that a site’s competitors can use to damage its search rankings and reputation. Today we’ll look at hacking and a couple of other Negative SEO tactics, so that you know the possible vectors of attack against your sites and what you can do about them.